An extraction task can be set up quickly with only a few steps: open web page - select items - extract data - get data - export data. Octoparse supports multi-step extractions and eventually combines the data into one output. For more detailed information, you can check out the Octoparse Tutorials.

Making a crawler in Portia is very similar to making one in Octoparse. Like Octoparse, Portia can automatically detect similar items on any page. Portia will find items that are structured the same as the sample you have created, and this step continues until you tell it to stop, you reach the limit of your Scrapinghub plan, or the software finishes checking every page. The way Portia gets data can lead to unexpected or unwanted data. To make up for this issue, Portia provides regular expressions to narrow down its search. Still, big sites like Amazon are hard to navigate this way. Check below for a simple example of how a Portia crawler works.

What is the difference between Octoparse and Portia?

As previously mentioned, Portia can only get data from pages that have the exact same layout; going between search results and more detailed product description pages is not possible. Portia can't interact with dropdown menus, pop-up windows, infinite scrolling pages, or pagination unless you use external libraries. It can't deal with CAPTCHAs, which are quite common on web pages. And you would not know which pages Portia gets its data from, as the scraper can't be controlled with any regular expressions.

According to my test, there's no difference in extraction speed between a Portia scraper running on Scrapinghub cloud and an Octoparse crawler running on my local machine. As for transforming the data with regular expressions or modifying the XPath, there are no tools available, so you will need to master XPath and regular expressions if you want to explore more of Portia.
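Since XPath and regular expressions come up repeatedly here, a small Python sketch may help show how the two work together: an XPath-style query selects items that share the same structure, and a regular expression narrows and cleans what was selected. The sample markup, the `extract_items` helper, and the price pattern are all illustrative assumptions, not anything taken from Portia or Octoparse. The standard-library `xml.etree.ElementTree` only supports a limited XPath subset and needs well-formed markup, so real pages would usually call for an HTML-aware parser.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical, well-formed sample markup standing in for a product listing page.
SAMPLE = """
<html><body>
  <div class="item"><h2>Blue Kettle</h2><span class="price">Price: $24.99</span></div>
  <div class="item"><h2>Red Toaster</h2><span class="price">Price: $39.50</span></div>
  <div class="ad"><h2>Sponsored</h2><span class="price">Call us</span></div>
</body></html>
"""

# Regular expression that keeps only the numeric part of a dollar price.
PRICE_RE = re.compile(r"\$(\d+(?:\.\d{2})?)")


def extract_items(markup):
    """Select same-structured items with XPath, then clean prices with a regex."""
    root = ET.fromstring(markup)
    items = []
    # Limited XPath: every <div> whose class attribute is exactly "item".
    for node in root.findall(".//div[@class='item']"):
        name = node.findtext("h2")
        raw_price = node.findtext("span[@class='price']") or ""
        match = PRICE_RE.search(raw_price)
        if match:  # skip entries whose price text does not match the pattern
            items.append({"name": name, "price": float(match.group(1))})
    return items


if __name__ == "__main__":
    for item in extract_items(SAMPLE):
        print(item)
```

The XPath predicate is what excludes the sponsored block, and the regex is what turns "Price: $24.99" into a number; either step alone would leave unwanted data in the output.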
With its simple point-and-click UI, extracting data with Octoparse can be rather easy. Octoparse, a visual web scraper, works by mimicking human browsing behavior and can be instructed to interact with a website in various ways, which allows it to scrape dynamic and more complex websites. Some of the more advanced features worth mentioning include scraping behind a login, selecting different options from a dropdown menu, search-based extraction, and dealing with infinite scrolling.

Furthermore, the built-in RegEx tool and XPath tool come in handy if you are looking to customize the extracted data. Octoparse is also pretty neat in that it has a workflow showing all the different steps of any extraction task, which I found useful for sorting out the logic behind the extraction.

Support: professional support, tutorials, community support.

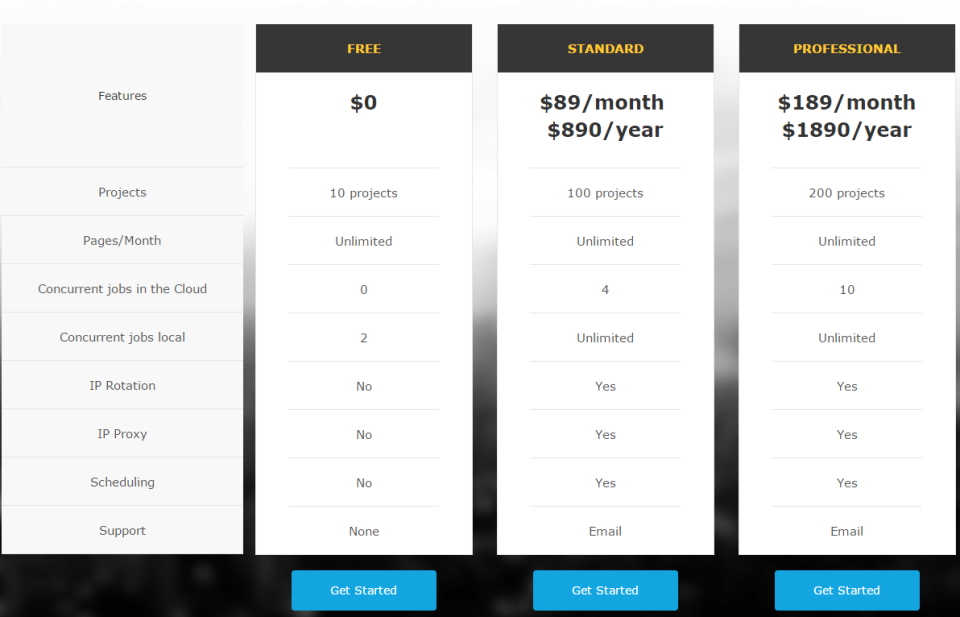

This method may surprise you, but Microsoft Excel can be a useful tool for data manipulation. With web scraping, you can easily get information saved in an Excel sheet. The only problem is that this method can be used for extracting tables only.

Modern data extraction tools are robust no-code/low-code solutions that support business processes. With three types of data extraction tools (batch processing, open-source, and cloud-based), you can create a cycle of web scraping and data analysis. So, let's review the best tools available on the market.

Portia, one of Scrapinghub's platforms, is a visual web scraping tool. In this article I will put it head to head with Octoparse to see how these two tools compare (check here for another comparison between Octoparse and import.io).

Octoparse at a glance: desktop app for Windows (available for Mac with a virtual machine); clicking on pagination links or manually entering the XPath (websites without "Next page" links); variables, loops, conditionals, function calls (via RegEx, XPath); pop-ups, infinite scroll, hover content, drop-downs, tabs; hosted on Octoparse cloud servers if subscribed to an Octoparse plan, or on a local machine with the free version; included in paid plans, or manual IP proxy in the free plan.
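The pagination idea mentioned in this section, following a pagination link from page to page until none is left, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `PAGES` dictionary is a hypothetical in-memory site standing in for real downloads, and `ListingParser` and `crawl` are made-up names, not part of Octoparse or Portia.

```python
from html.parser import HTMLParser

# Hypothetical site: URL -> HTML. A real crawler would download these pages.
PAGES = {
    "/list?page=1": '<ul><li class="item">A</li><li class="item">B</li></ul>'
                    '<a rel="next" href="/list?page=2">Next</a>',
    "/list?page=2": '<ul><li class="item">C</li></ul>'
                    '<a rel="next" href="/list?page=3">Next</a>',
    "/list?page=3": '<ul><li class="item">D</li></ul>',
}


class ListingParser(HTMLParser):
    """Collects item texts and the link marked rel="next", if any."""

    def __init__(self):
        super().__init__()
        self.items = []
        self.next_url = None
        self._in_item = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li" and attrs.get("class") == "item":
            self._in_item = True
        elif tag == "a" and attrs.get("rel") == "next":
            self.next_url = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "li":
            self._in_item = False

    def handle_data(self, data):
        if self._in_item and data.strip():
            self.items.append(data.strip())


def crawl(start_url, pages, max_pages=10):
    """Follow "next" links until none is found or max_pages is reached."""
    items, url, seen = [], start_url, set()
    while url and url in pages and url not in seen and len(seen) < max_pages:
        seen.add(url)
        parser = ListingParser()
        parser.feed(pages[url])
        parser.close()
        items.extend(parser.items)
        url = parser.next_url  # None on the last page, which ends the loop
    return items


if __name__ == "__main__":
    print(crawl("/list?page=1", PAGES))
```

The `seen` set and the `max_pages` cap are there so that a site whose "next" link loops back on itself cannot keep the crawler running forever.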

Manually extracting data from a website (copying and pasting information into a spreadsheet) is time-consuming and difficult when dealing with big data. There are several ways of manual web scraping. If the company has in-house developers, it is possible to build a web scraping pipeline. Software can be built quickly with any general-purpose programming language such as Java, JavaScript, PHP, C, or C#. Nevertheless, Python is the top choice because of its simplicity and the availability of libraries for developing a web scraper.

A data service is a professional web service providing research and data extraction according to business requirements. Such services may be a good option if there is a budget for data extraction.
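To give a feel for what a small Python scraper looks like compared with copying and pasting into a spreadsheet, here is a minimal sketch that turns an HTML table into CSV text using only the standard library. The `TableParser` class, the `table_to_csv` helper, and the sample table are assumptions made for illustration; a production scraper would also download the page and would more likely rely on a dedicated parsing library.

```python
import csv
import io
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collects the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def table_to_csv(html):
    """Return the table rows found in `html` as CSV text."""
    parser = TableParser()
    parser.feed(html)
    parser.close()
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(parser.rows)
    return buffer.getvalue()


# Hypothetical sample page fragment.
SAMPLE_HTML = """
<table>
  <tr><th>Tool</th><th>Type</th></tr>
  <tr><td>Octoparse</td><td>Desktop app</td></tr>
  <tr><td>Portia</td><td>Visual scraping tool</td></tr>
</table>
"""

if __name__ == "__main__":
    print(table_to_csv(SAMPLE_HTML))
```

The returned text can be written to a `.csv` file and opened directly in a spreadsheet application.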
